Why technical domains like Intellectual Property analysis demand more than statistical prediction from generative AI

In recent years, the rapid diffusion of Natural Language Processing technologies and Large Language Models has profoundly reshaped the way organizations interact with information. These systems have become increasingly accessible, fueling a widespread belief that artificial intelligence can serve as a universal solution, capable of answering any question, supporting any process, and replacing specialized expertise. While this perception reflects the remarkable progress of AI, it also introduces a subtle but significant risk. In highly technical domains such as engineering and Intellectual Property, the democratization of these tools can create the illusion of understanding where, in reality, only statistical approximation exists.

This tension becomes particularly evident when LLMs are applied to patent analysis. Patents are not simply legal documents; they are structured representations of technological knowledge, capturing solutions, constraints, and functional relationships in a highly formalized language. As such, they provide one of the most valuable sources of insight for companies seeking to anticipate innovation trends, monitor competitors, and optimize research and development strategies. In many cases, patent data can reveal technological trajectories years before they become visible in the market, allowing organizations to position themselves strategically and avoid redundant investments.

When accessibility creates an illusion of understanding

However, the very richness of patent data also makes it difficult to interpret. The volume of available documents is enormous, their quality is uneven, and their language is dense and deeply domain-specific. Extracting meaningful insights requires more than the ability to process text at scale; it requires the capability to understand how technologies work, how components interact, and why certain solutions are effective. This is precisely where generalist LLMs begin to show their limitations.

At their core, LLMs operate by identifying patterns in language. When prompted, they convert the input text into numerical representations and, drawing on statistical patterns learned from vast amounts of training data, generate the continuation that is most likely to follow. The result is text that appears coherent, fluent, and often highly convincing. Yet this process does not involve true comprehension. The model does not understand the physical principles underlying a mechanism, nor does it reason about cause and effect in the way an engineer would. Instead, it predicts what is most likely to be said next.
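To make the idea of statistical prediction concrete, the following is a minimal sketch of a bigram word predictor. It is a deliberately crude stand-in for an LLM, not a description of how any real model is implemented; the corpus and the function names (`train_bigrams`, `predict_next`, `generate`) are invented for this illustration.

```python
from collections import Counter, defaultdict

# Toy corpus invented for this illustration; real models train on far more text.
CORPUS = (
    "the valve controls the flow . "
    "the valve controls the pressure . "
    "the valve controls the flow . "
    "the pump increases the pressure ."
).split()

def train_bigrams(tokens):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return (most frequent follower, relative frequency), or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    best, n = followers.most_common(1)[0]
    return best, n / sum(followers.values())

def generate(counts, start, steps):
    """Greedily append the most likely next word, one step at a time."""
    words = [start]
    for _ in range(steps):
        prediction = predict_next(counts, words[-1])
        if prediction is None:
            break
        words.append(prediction[0])
    return words

counts = train_bigrams(CORPUS)
print(predict_next(counts, "the"))
print(" ".join(generate(counts, "pump", 4)))
```

Starting from "pump", the greedy continuation is "pump increases the valve controls". Every step picks the most frequent follower, so the text is locally plausible, yet nothing in the procedure checks whether a pump can do anything of the sort.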

This distinction, while subtle in everyday applications, becomes critical in technical contexts. Engineering knowledge is not based on linguistic similarity but on functional logic. Two terms may appear unrelated in general language but be closely connected in a technical system, or vice versa. Generalist models, lacking access to structured representations of these relationships, often fail to capture this dimension. As a result, they may produce interpretations that are plausible from a linguistic standpoint but incorrect from an engineering perspective.

Hallucinations and structural fragility in patent analysis

The consequences of this gap are particularly evident in patent-related tasks. Research has shown that LLMs can generate confident but unfounded responses (a phenomenon commonly referred to as hallucination), with reported error rates of between 17% and 33% in legal and patent applications. Error rates of this magnitude are not merely inconvenient; they are potentially dangerous in a domain where decisions influence investments, intellectual property strategies, and competitive positioning.

Beyond hallucinations, deeper structural issues emerge. When analyzing patents, generalist models tend to treat claims as flat text, failing to recognize the hierarchical and logical structure that defines their scope and validity. They struggle to interpret the functional role of components within a system and cannot reliably reconstruct the relationships between them. Moreover, their outputs often lack explainability, offering conclusions without a transparent reasoning process that can be verified or challenged. Even consistency becomes an issue, as similar inputs may lead to different answers, making it difficult to rely on the model for systematic analysis. Perhaps most critically, these systems tend to focus on frequent patterns, overlooking rare but strategically significant signals that often represent the earliest indicators of emerging innovation.
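The hierarchical structure of claims mentioned above can be made explicit with very little code. The sketch below is a simplified illustration under stated assumptions: the claim set is hypothetical, and the parser only handles a single "of claim N" reference per claim, not multiple dependencies such as "any of claims 1 to 3".

```python
import re

# Hypothetical claim set, written only for this illustration.
CLAIMS = {
    1: "A valve assembly comprising a housing and a spring-loaded plunger.",
    2: "The valve assembly of claim 1, wherein the housing is made of steel.",
    3: "The valve assembly of claim 2, wherein the steel is stainless.",
    4: "A method of assembling a valve, comprising inserting a plunger into a housing.",
    5: "The method of claim 4, further comprising sealing the housing.",
}

CLAIM_REF = re.compile(r"\bclaim (\d+)\b", re.IGNORECASE)

def parent_of(text):
    """Return the claim number this claim depends on, or None if independent."""
    match = CLAIM_REF.search(text)
    return int(match.group(1)) if match else None

def ancestry(claims, number):
    """Return the chain from the independent claim down to `number`."""
    chain = [number]
    while (parent := parent_of(claims[chain[-1]])) is not None:
        chain.append(parent)
    return chain[::-1]

parents = {n: parent_of(text) for n, text in CLAIMS.items()}
independent = [n for n, p in parents.items() if p is None]

print(parents)
print(independent)
print(ancestry(CLAIMS, 3))
```

Read as flat text, claim 3 only mentions stainless steel. Read through its ancestry (claims 1, 2, 3), it covers a valve assembly with a housing and plunger, where the housing is steel and that steel is stainless. The scope of a dependent claim is only visible once the chain is reconstructed.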

These limitations are not only technical but also economic in nature. As organizations attempt to integrate AI into complex workflows, particularly through advanced agentic systems, the costs associated with development, maintenance, and validation become increasingly significant. At the same time, the benefits are not always clear or measurable, leading to growing skepticism about the long-term sustainability of such investments. This reinforces the idea that AI, when used without a clear methodological framework, may fail to deliver the expected value.

Toward a hybrid model: AI and human expertise

A more effective approach emerges when AI is not treated as a standalone solution but as part of a broader, hybrid model. In this perspective, artificial intelligence complements rather than replaces human expertise, and its capabilities are enhanced through the integration of structured knowledge.

The real shift occurs when AI is enriched with domain-specific knowledge structures that reflect the logic of engineering itself. This is where ontologies play a crucial role.

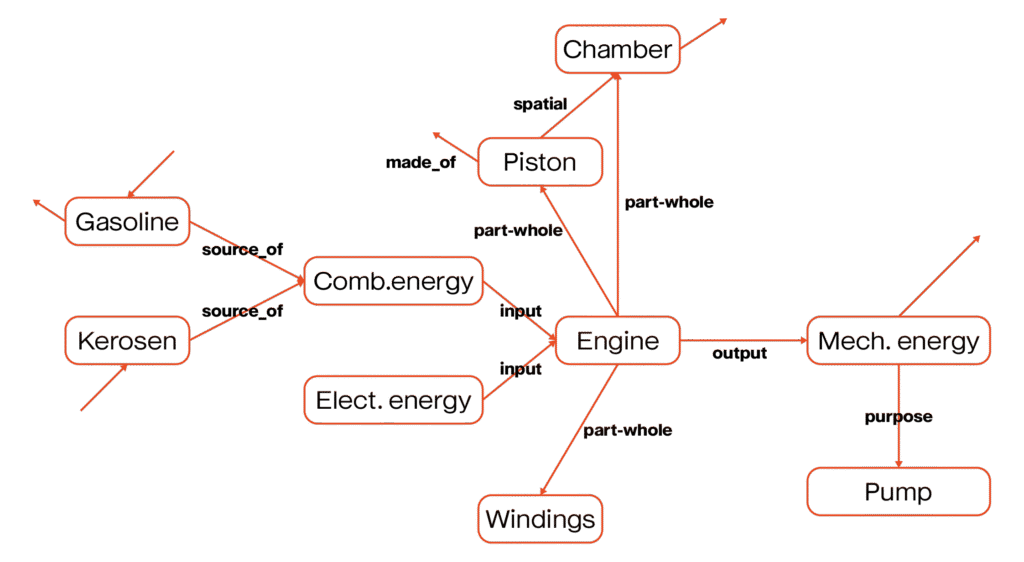

Figure 1: A technical domain ontology

A domain ontology provides a formal representation of the key elements within a technical field, defining not only the entities involved (such as components, materials, and processes) but also the relationships that connect them. These relationships include hierarchies, dependencies, attributes, and cause-effect links, all of which are essential for understanding how a system functions.
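As a rough illustration of what such a representation can look like in practice, the sketch below stores a tiny ontology as subject-relation-object triples and follows relations transitively. The entities, the relation names (`is_a`, `has_part`, `made_of`, `causes`), and the helper functions are all assumptions made for this example; production ontologies are typically expressed in dedicated formalisms and are far larger.

```python
from collections import namedtuple

Triple = namedtuple("Triple", "subject relation object")

# Hypothetical mini-ontology for a hydraulic system, invented for illustration.
ONTOLOGY = [
    Triple("gear pump", "is_a", "pump"),           # hierarchy
    Triple("pump", "is_a", "component"),
    Triple("seal", "is_a", "component"),
    Triple("pump", "has_part", "seal"),            # dependency
    Triple("seal", "made_of", "elastomer"),        # attribute
    Triple("seal wear", "causes", "leakage"),      # cause-effect links
    Triple("leakage", "causes", "pressure loss"),
]

def objects(triples, subject, relation):
    """Direct targets of `relation` starting from `subject`."""
    return [t.object for t in triples
            if t.subject == subject and t.relation == relation]

def closure(triples, start, relation):
    """Everything reachable from `start` by following `relation` repeatedly."""
    seen, stack = [], [start]
    while stack:
        node = stack.pop()
        for nxt in objects(triples, node, relation):
            if nxt not in seen:
                seen.append(nxt)
                stack.append(nxt)
    return seen

def inherited_parts(triples, entity):
    """Parts of `entity`, including those inherited through its is_a chain."""
    parts = []
    for cls in [entity] + closure(triples, entity, "is_a"):
        parts.extend(objects(triples, cls, "has_part"))
    return parts

print(closure(ONTOLOGY, "gear pump", "is_a"))
print(closure(ONTOLOGY, "seal wear", "causes"))
print(inherited_parts(ONTOLOGY, "gear pump"))
```

The words "seal wear" and "pressure loss" share no surface similarity, yet the cause-effect chain connects them, and a gear pump is found to contain a seal even though no triple states it directly. This is the kind of functional relationship that word-level statistics alone do not guarantee.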

By embedding such structures into AI systems, it becomes possible to move beyond purely statistical associations and toward a more meaningful interpretation of data. The model is no longer limited to recognizing patterns in language but can begin to interpret the functional significance of those patterns within a technical context. In other words, it can be guided to “think” in a way that more closely resembles engineering reasoning. This transformation has a profound impact on the quality of analysis, enabling more accurate classification, more reliable identification of relevant documents, and a deeper understanding of technological trends.

Why human expertise remains essential in hybrid AI models

At the same time, the role of human expertise remains indispensable. Even the most advanced systems require validation, interpretation, and contextualization. The human-in-the-loop approach ensures that results are not only technically accurate but also strategically meaningful. Experts can identify inconsistencies, refine the scope of analysis, and determine when the available information is sufficient to support a decision. Rather than diminishing the importance of human judgment, AI amplifies it, allowing analysts to focus on higher-level reasoning while delegating repetitive tasks to machines.

A trustworthy path for AI in patent analysis

Ultimately, the application of AI to Intellectual Property highlights a broader lesson about the nature of technological innovation. The accessibility of powerful tools does not eliminate the need for expertise; on the contrary, it makes that expertise even more critical. Generalist LLMs, despite their impressive capabilities, are not designed to handle the complexity of engineering knowledge on their own. Treating them as universal solutions risks oversimplifying problems that require depth, rigor, and domain-specific understanding.

The path forward lies in recognizing both the potential and the limits of these technologies. By integrating LLMs with structured knowledge frameworks, reliable data sources, and human expertise, organizations can develop systems that are not only efficient but also trustworthy. In the context of patent analysis, this hybrid approach is not just advantageous; it is essential.

References

1 Yoshikawa, N., & Krestel, R. (2025). Do large language models understand patents? Enhancing patent automatic classification via LLM-generated summaries. World Patent Information, 81, 102353. https://www.sciencedirect.com/science/article/abs/pii/S0172219025000201

2 Magesh, V., Surani, F., Dahl, M., Suzgun, M., Manning, C. D., & Ho, D. E. (2024). Hallucination-free? Assessing the reliability of leading AI legal research tools. Stanford Human-Centered AI.

3 Ikoma, H., & Mitamura, T. (2025). Can AI examine novelty of patents? Novelty evaluation based on the correspondence between patent claim and prior art.

4 Covello, J. (2024). Gen AI: Too much spend, too little benefit? Goldman Sachs Global Investment Research.

5 Serratore, G., & Clunis, J. (2025). The integration of artificial intelligence and ontologies: Transformations in knowledge representation and application. NASKO, 10, 1–11. https://doi.org/10.7152/nasko.v7i1.95643