Experts and superforecasters are divided on AI’s future. Between progress and apocalypse, the challenge is whether we can manage the consequences

What would happen if artificial intelligence became skilled enough to accelerate its own research and development? This question is at the heart of one of the most crucial debates of our time. It evokes scenarios of “recursive improvement” that could lead to an “intelligence explosion”, with profound social impacts, as well as the prospect of unpredictable and potentially harmful effects.

Although the idea of a self-improving AI has become a concrete concern for regulators and developers, until now, little research has attempted to systematically quantify the odds and consequences of such an event. A recent pilot study titled Forecasting the Significance of AI R&D Capabilities: Results of a Pilot Study, by the non-profit research institute METR, delved into this uncharted territory using a methodology known as “judgmental forecasting”.

The research compared the predictions of two distinct groups: on one side, experts with specific experience in the AI field; on the other, superforecasters. Superforecasters are people with a proven track record of predicting future events unusually well. They think in probabilities and, when put to the test, achieve statistically strong accuracy in their predictions.
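To make “statistically strong accuracy” concrete: one widely used way to score probabilistic forecasts is the Brier score, the mean squared difference between the stated probabilities and what actually happened (1 if the event occurred, 0 if not). The article does not say which metric was used to identify these superforecasters, so the sketch below is only an illustration; the forecasters, questions and numbers in it are hypothetical.

```python
def brier_score(forecasts: list[float], outcomes: list[int]) -> float:
    """Mean squared error between probabilities and 0/1 outcomes (lower is better)."""
    if not forecasts or len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must be non-empty and equal in length")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)

# Hypothetical: four yes/no questions, 1 = the event happened.
outcomes = [1, 0, 0, 1]
calibrated = [0.9, 0.1, 0.2, 0.8]     # hedged, mostly right
overconfident = [1.0, 1.0, 0.0, 0.0]  # certain every time, right half the time

print(round(brier_score(calibrated, outcomes), 3))
print(brier_score(overconfident, outcomes))
```

The calibrated forecaster scores about 0.025, while the overconfident one scores 0.5. Over many questions, consistently low scores like the first are what separate a skilled forecaster from a lucky one.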

The study’s goal is twofold. The first is to understand whether an AI capable of researching itself could lead to an exponential acceleration of technological progress. The second is to assess whether this progress could trigger extreme events, such as global energy crises or other large-scale catastrophes.

A hard forecast

The results of this experiment revealed a surprising dichotomy. On the first question, experts and superforecasters largely agree: they believe AI that conducts its own research could increase its capabilities exponentially and, just as quickly, deliver useful technological breakthroughs. Both groups assign a non-negligible probability (a median of 20% for experts and 8% for superforecasters) to the pace of AI progress at least tripling by 2029. Even more significantly, they agree that AI achieving autonomous R&D capabilities would drastically increase that probability.

Diverging views on risk

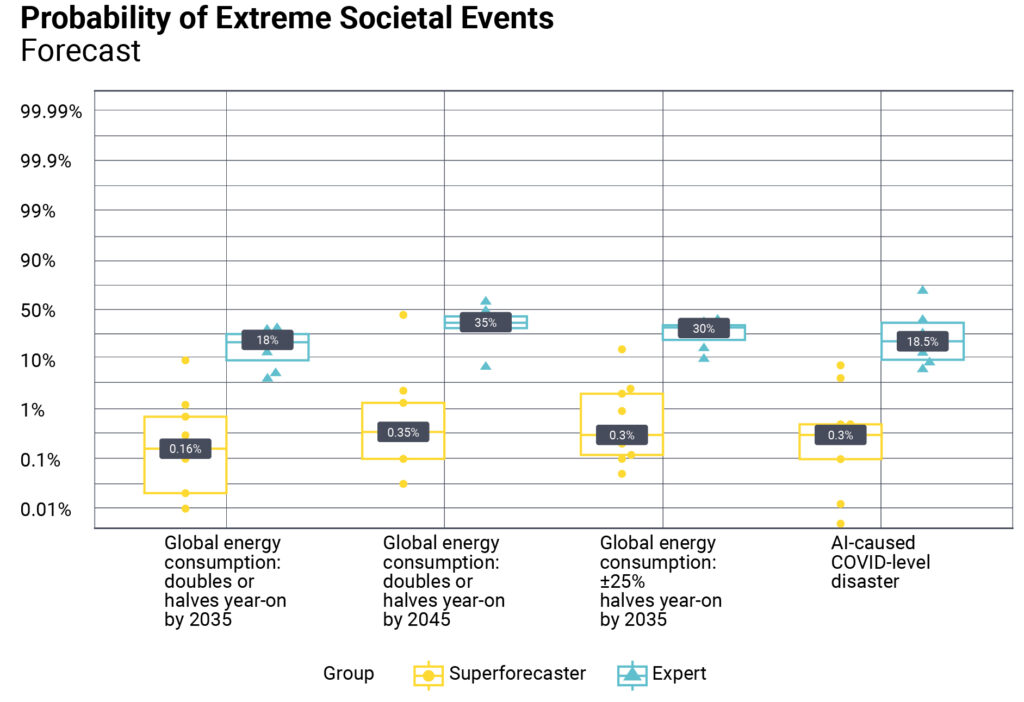

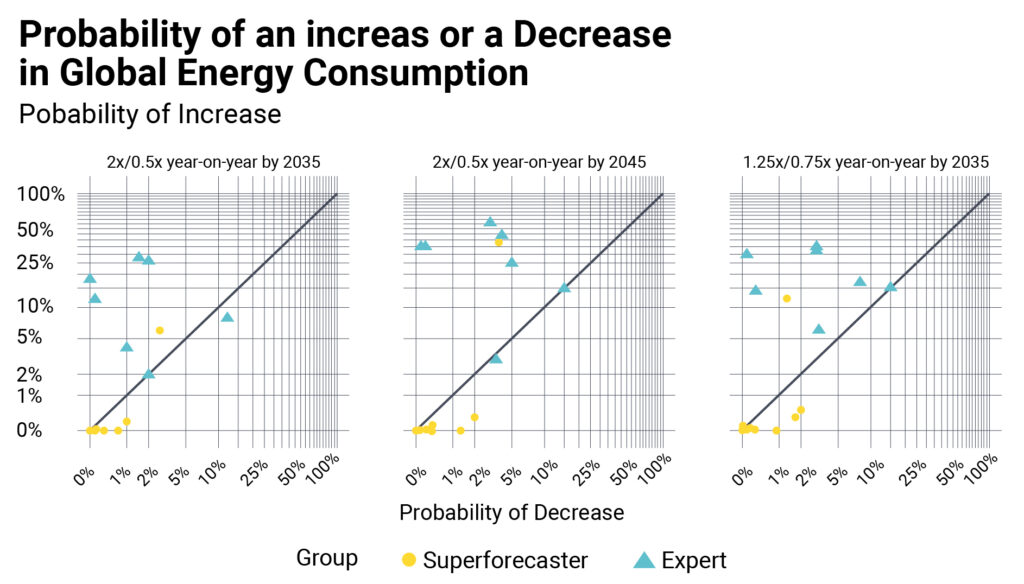

However, it is on the second question that their opinions divide sharply. Experts tend to see an almost direct link between AI acceleration and a dramatic increase in catastrophe risk. In contrast, the superforecasters are much more skeptical. While acknowledging the possibility of rapid technological progress, they don’t believe it would automatically translate into extreme social impacts. Their analysis suggests that technical barriers, physical limits, and, above all, human intervention would act as a brake, preventing the most harmful outcomes.

Quantifying the gap

This divergence is enormous. For an event like the doubling or halving of global energy consumption by 2035, the median probability assigned by experts is two orders of magnitude higher than that of the superforecasters (18% versus 0.16%).
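The size of the gap can be checked directly from the medians quoted in this article. The sketch below compares the two groups on both questions; the helper name `gap` is purely illustrative, and the only inputs are the percentages given above, written as fractions.

```python
import math

def gap(expert_p: float, superforecaster_p: float) -> tuple[float, float]:
    """Return (ratio, orders of magnitude) between two median probabilities."""
    ratio = expert_p / superforecaster_p
    return ratio, math.log10(ratio)

# Medians quoted in the article (20% = 0.20, 0.16% = 0.0016).
pace_ratio, pace_orders = gap(0.20, 0.08)          # AI progress at least triples by 2029
energy_ratio, energy_orders = gap(0.18, 0.0016)    # energy use doubles or halves by 2035

print(f"pace:   {pace_ratio:.1f}x ({pace_orders:.2f} orders of magnitude)")
print(f"energy: {energy_ratio:.1f}x ({energy_orders:.2f} orders of magnitude)")
```

On accelerating progress the experts’ median is about 2.5 times the superforecasters’, well under one order of magnitude. On the energy question it is roughly 112 times higher (log10 ≈ 2.05), which is where the “two orders of magnitude” comes from.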

…unconditionally and conditional on a 3x and 10x acceleration in the scale-up of effective compute. This change is relative to a scenario where the rate of AI progress remains similar to that seen between 2018 and 2024. (Source: METR)

This fracture in judgment isn’t just a statistical curiosity; it is the heart of the problem. The study highlights that the real divergence isn’t about whether AI could get smarter faster (on that point the two groups largely agree), but about whether we will be able to manage the consequences. For the superforecasters, technological acceleration does not seem to be the crucial factor in determining a catastrophic future. This suggests that the AI safety debate should perhaps focus less on the speed of progress and more on control mechanisms and real-world barriers.

The report Forecasting the Significance of AI R&D Capabilities: Results of a Pilot Study is just one of many important studies attempting to forecast AI’s impact on our lives, and it has limitations that future research can address. The first is the very small sample: only 8 experts (just 4 of whom had specific expertise in AI and machine learning) and 9 superforecasters took part. With so few participants, the results are more sensitive to individual predictions and may underestimate potential “low-risk” scenarios. Nevertheless, the findings are a strong prompt to investigate more deeply how experts and superforecasters assess the future impacts of AI and its progress.